Article of the Month - July 2019


A Benchmarking Measurement Campaign in GNSS-denied/Challenged Indoor/Outdoor and Transitional Environments

Allison KEALY, Australia; Guenther RETSCHER, Austria; Jelena GABELA, Australia; Yan LI, Australia; Salil GOEL, India; Charles K. TOTH, U.S.A.; Andrea MASIERO, Italy; Wioleta BŁASZCZAK-BĄK, Poland; Vassilis GIKAS, Greece; Harris PERAKIS, Greece; Zoltan KOPPANYI, U.S.A.; Dorota GREJNER-BRZEZINSKA, U.S.A.

This article in .pdf-format (19 pages)

This article is a peer-reviewed paper, presented at the FIG Working Week 2019 in Hanoi, Vietnam. The paper received the navXperience award, given to the best peer-reviewed paper in the area of positioning and measurement (FIG Commission 5).

Key words: cooperative positioning, indoor positioning, indoor-outdoor smooth transition, sensor integration, vehicle and pedestrian navigation

SUMMARY

This paper reports on a sequence of extensive experiments conducted in GNSS-denied/challenged, indoor/outdoor and transitional environments at The Ohio State University as part of the joint FIG Working Group 5.5 and IAG Working Group 4.1.1 on Multi-sensor Systems. The overall aim of the campaign is to assess the feasibility of achieving GNSS-like performance for ubiquitous positioning, i.e., autonomous, global and preferably infrastructure-free positioning of portable platforms at affordable cost. The major focus is therefore on cooperative positioning (CP) of vehicles and pedestrians, where several platforms navigate jointly. The GPSVan of The Ohio State University was used as the main reference vehicle, and a specially designed helmet was developed for the pedestrians. The employed and tested positioning techniques are based on sensor data from GNSS, Ultra-wide Band (UWB), Wireless Fidelity (Wi-Fi), vision-based positioning with cameras, Light Detection and Ranging (LiDAR) and inertial sensors. This paper introduces the experimental schemes and initial results. The results of the experimental campaign demonstrate performance improvements due to the application of CP techniques.

1. INTRODUCTION

Localization indoors and in environments where GNSS (Global Navigation Satellite Systems) signals are obscured remains one of the most challenging research problems. Cooperative positioning (CP), or cooperative localization (CL), has been demonstrated to be extremely useful for the positioning and navigation of mobile platforms within a neighborhood. CP, however, is still based mainly on GNSS augmented with inertial sensors. In challenging GNSS-denied or combined indoor/outdoor environments, alternative positioning technologies are required (see e.g. Alam and Dempster, 2013; Kealy et al., 2015). This paper investigates Ultra-wide Band (UWB), Wireless Fidelity (Wi-Fi), vision-based positioning with cameras and Light Detection and Ranging (LiDAR) as alternative and complementary augmentation techniques. A benchmarking measurement campaign was carried out at The Ohio State University in October 2017. In the experiments, vehicles and pedestrians navigated jointly to achieve ubiquitous CP (see e.g. Kealy et al., 2011; Retscher and Kealy, 2006), including seamless transitions between indoor and outdoor environments. This paper summarizes the experimental schemes and their characteristics and presents initial results.

2. SEAMLESS INDOOR-OUTDOOR COOPERATIVE LOCALIZATION FOR PEDESTRIANS

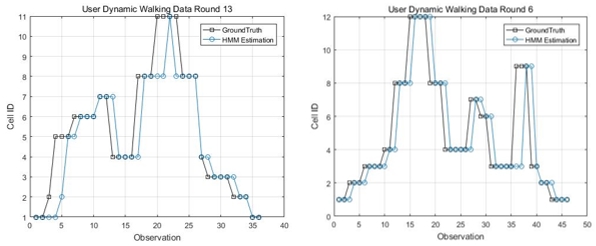

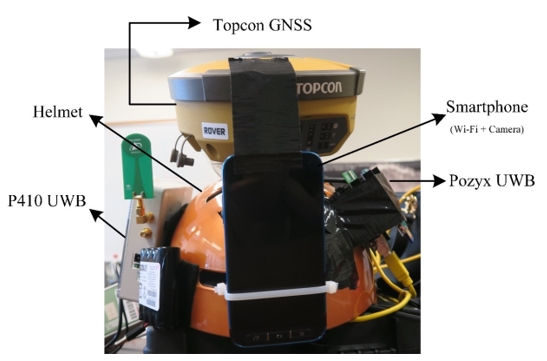

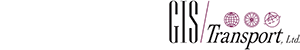

In the experiments, we develop a cooperative system comprising of

four pedestrians using an integration of sensors such as UWB, GNSS,

Raspberry Pi, Wi-Fi and camera, with the objective of achieving precise

positioning in indoor environments, as well as providing a seamless

position transition between indoor and outdoor environments. An overview

of the sensors used in the proposed system is shown in Figure 1. These

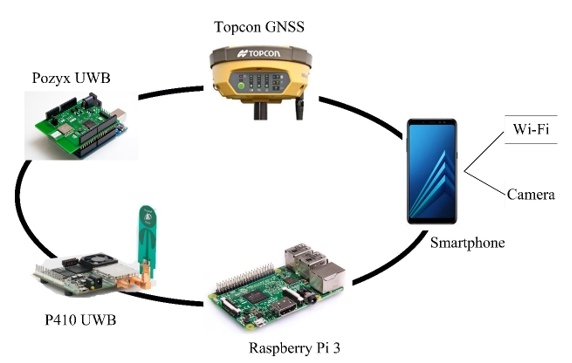

sensors are installed on a helmet that could be worn by a pedestrian.

One of the helmets (with installed sensors) is shown in Figure 2. Three

of the four such helmets developed in this research are shown in

Figure 3.

Figure 1: Overview of the sensors integrated on one of the pedestrian helmets in the developed system.

Figure 2: Sensors installed on a helmet.

Figure 3: Three of the four helmets developed in this research.

In outdoor environments, the positioning solution is derived

primarily from GNSS and relative range observations among pedestrians.

In indoor and transition environments, the localization solution is

estimated using relative range observations among pedestrians, camera

observations, and Wi-Fi RSS (Received Signal Strength) measurements. In

these experiments, four pedestrians start from outdoor environments

where GNSS observations are available to all pedestrians. In addition, each pedestrian is observing relative range

measurements to other pedestrians. All the pedestrians then transition

from outdoor to indoor environments and thus, each pedestrian starts to

lose GNSS signals successively. Once all pedestrians are indoors, GNSS

observations are not available to any of the pedestrians. In such

conditions, pedestrians rely on relative UWB ranges (including ranges

between pedestrians, and ranges between pedestrian and anchors, i.e., a

set of static devices, fixed on constant positions), Wi-Fi measurements,

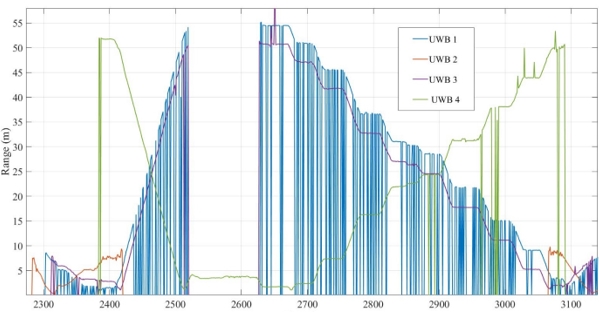

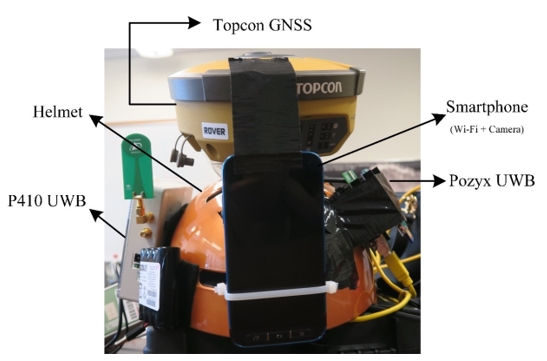

and camera observations, for localizing all users cooperatively. A total

of 18 UWB range observations either between pedestrians or between

pedestrian and static anchors are available for localization in indoor

and transition environments. A plot of range measurements as observed by

a pedestrian with respect to four UWBs as a function of time is shown in

Figure 4. It is seen that a maximum range of at least 60 m is achievable

in indoor environments. At certain instants, for example between 2500 to

2600 seconds x 100, significant outages in the UWB communication are

observed. This is most likely due to non-availability of direct line of

sight between the two UWBs. At time instants between 2700 and

3100 s x 100, recurring communication outages (for UWB 1) are observed.

Further, it is observed that UWB ranges are corrupted by outliers that

are likely because of multipath in indoor environments. Such outliers

should be accounted for, within the cooperative state estimation

framework.
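As an illustration of how such outliers might be handled, the following minimal Python sketch estimates a single pedestrian's 2D position from UWB ranges to anchors by Gauss-Newton least squares and iteratively rejects the range with the largest post-fit residual. It is not the cooperative estimator used in the experiments: inter-pedestrian ranges, Wi-Fi and camera observations are omitted, and the function name `robust_uwb_fix`, the 0.5 m gate and the anchor layout are hypothetical.

```python
import numpy as np


def robust_uwb_fix(anchors, ranges, x0, gate=0.5, iters=20):
    """Least-squares 2D fix from UWB anchor ranges with simple outlier rejection.

    anchors: (m, 2) known anchor coordinates [m]
    ranges:  (m,) measured ranges [m]
    x0:      (2,) initial position guess [m]
    gate:    hypothetical post-fit residual threshold [m]
    Returns the position estimate and a mask of accepted ranges.
    """
    anchors = np.asarray(anchors, dtype=float)
    ranges = np.asarray(ranges, dtype=float)
    keep = np.ones(len(ranges), dtype=bool)
    x = np.asarray(x0, dtype=float).copy()
    while True:
        # Gauss-Newton iterations using only the currently accepted ranges
        for _ in range(iters):
            diff = x - anchors[keep]
            pred = np.linalg.norm(diff, axis=1)
            H = diff / pred[:, None]  # Jacobian rows: unit vectors anchor -> rover
            dx, *_ = np.linalg.lstsq(H, ranges[keep] - pred, rcond=None)
            x = x + dx
        # Post-fit residuals; already rejected ranges are ignored
        res = np.abs(ranges - np.linalg.norm(x - anchors, axis=1))
        res[~keep] = 0.0
        worst = int(np.argmax(res))
        # Stop if every residual passes the gate or too few ranges would remain
        if res[worst] < gate or keep.sum() <= 3:
            return x, keep
        keep[worst] = False  # treat the largest residual as a multipath outlier


# Usage with a hypothetical anchor layout and one biased range
anchors = [(0, 0), (10, 0), (10, 10), (0, 10), (5, -5), (5, 15)]
truth = np.array([5.0, 5.0])
ranges = np.linalg.norm(truth - np.asarray(anchors, dtype=float), axis=1)
ranges[0] += 2.0  # simulated multipath bias on the first anchor
x_hat, accepted = robust_uwb_fix(anchors, ranges, x0=(4.0, 6.0))
```

With the redundant six-anchor geometry of the example, the biased range is excluded and the fix returns to the true position. Residual gating of this kind depends on redundancy; with only three or four ranges it can no longer isolate an outlier reliably.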

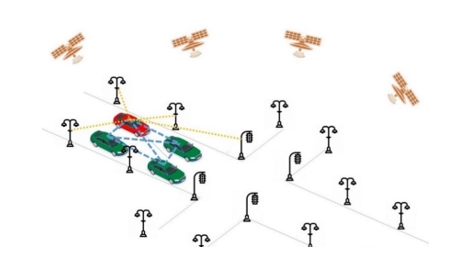

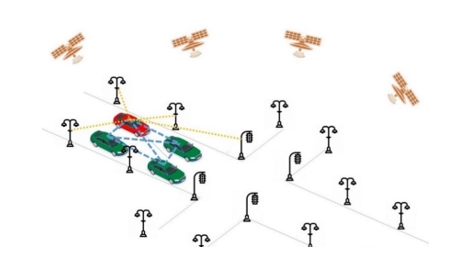

3. COOPERATIVE OUTDOOR VEHICLE POSITIONING

As part of this campaign, a set of outdoor data was collected. The aim of the data collection was to provide data for further research on navigation and integrity monitoring solutions for Intelligent Transport Systems (ITS) in urban environments. The outdoor tests included multiple platforms and an extended sensor configuration: multiple LiDARs and a range of still and video cameras were used, both for quality assessment and to support image-based navigation. The platforms included four vehicles, two cyclists and pedestrians sharing the same road section and performing various motion patterns. These experiments were planned with the challenges of urban environments in mind (e.g. GNSS unavailability, poor satellite geometry), as well as the inadequacy of Inertial Measurement Unit (IMU) and GNSS sensor fusion for certain ITS applications. An ad-hoc CP network was set up that is independent of GNSS and enables the collection of redundant measurements.

Figure 4: Plot of range observations from 4 UWBs with time.

A total of 16 points were set up as static infrastructure nodes. The infrastructure nodes were equipped with Time Domain P440 and P410 UWB radios for relative ranging. This allowed the vehicles to communicate with the infrastructure and to position themselves from the known positions of the infrastructure nodes and the measured relative ranges to them, which defines Vehicle-to-Infrastructure (V2I) CP. To allow for communication between the four cars, every car was equipped with P410 UWB radios. With every car sharing its position and its relative ranges to the other cars, Vehicle-to-Vehicle (V2V) CP was enabled. This set-up is shown in Figure 5. Every car was equipped with a survey-grade GNSS receiver and one UWB radio for V2V CP. Given the limited number of available sensors, only one vehicle was equipped with an additional UWB radio for V2I CP and with IMUs (Honeywell H764G1 and H764G2, MicroStrain 3DM-GX3-35).
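To illustrate how V2I and V2V ranges can be combined, the sketch below stacks both observation types into one Gauss-Newton least-squares adjustment of all vehicle positions. It is a hypothetical 2D example, not the processing applied to the campaign data: the function `cooperative_fix`, the anchor IDs and the geometry are assumptions, GNSS and IMU observations are left out, and all ranges are weighted equally.

```python
import numpy as np


def cooperative_fix(anchors, v2i, v2v, x0, iters=20):
    """Joint Gauss-Newton adjustment of several vehicle positions (2D).

    anchors: dict anchor_id -> (x, y) of known infrastructure nodes [m]
    v2i:     list of (vehicle, anchor_id, range) vehicle-to-infrastructure ranges
    v2v:     list of (vehicle_i, vehicle_j, range) vehicle-to-vehicle ranges
    x0:      (n_vehicles, 2) initial positions [m]
    """
    x = np.asarray(x0, dtype=float).copy()
    n = x.shape[0]
    for _ in range(iters):
        rows, res = [], []
        for v, a, rng in v2i:
            d = x[v] - np.asarray(anchors[a], dtype=float)
            pred = np.linalg.norm(d)
            row = np.zeros(2 * n)
            row[2 * v:2 * v + 2] = d / pred  # only vehicle v's coordinates enter
            rows.append(row)
            res.append(rng - pred)
        for i, j, rng in v2v:
            d = x[i] - x[j]
            pred = np.linalg.norm(d)
            row = np.zeros(2 * n)
            row[2 * i:2 * i + 2] = d / pred  # a V2V range couples both vehicles
            row[2 * j:2 * j + 2] = -d / pred
            rows.append(row)
            res.append(rng - pred)
        dx, *_ = np.linalg.lstsq(np.array(rows), np.array(res), rcond=None)
        x += dx.reshape(n, 2)
    return x


def dist(p, q):
    """Euclidean distance used to simulate error-free ranges."""
    return float(np.linalg.norm(np.subtract(p, q)))


# Hypothetical geometry: vehicle 0 sees three anchors, vehicle 1 only two
anchors = {"A1": (0.0, 0.0), "A2": (20.0, 0.0), "A3": (0.0, 20.0)}
truth = np.array([[5.0, 5.0], [15.0, 12.0]])
v2i = [(0, a, dist(truth[0], anchors[a])) for a in ("A1", "A2", "A3")]
v2i += [(1, a, dist(truth[1], anchors[a])) for a in ("A2", "A3")]
v2v = [(0, 1, dist(truth[0], truth[1]))]
est = cooperative_fix(anchors, v2i, v2v, x0=[[6.0, 4.0], [14.0, 13.0]])
```

Two anchor ranges alone leave vehicle 1 with a mirror ambiguity; the single V2V range to vehicle 0 supplies the third constraint, which is the basic benefit of CP in this toy set-up.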

Figure 5: Experimental set-up of V2V and V2I CP.

The datasets were collected in an open-sky environment, which enabled the simultaneous collection of ground truth. The experiment consisted of two different tasks. The first part aimed to collect data while the cars were driving in different formations along the lane (Figure 6). The second part focused on intersection-level positioning, where the cars performed different operations at intersections (Figure 7). These two datasets provide an opportunity for further research on optimal CP network geometries given specific ITS application requirements (integrity, accuracy, continuity, availability).
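One common way to compare network geometries is the dilution of precision (DOP) known from GNSS. The sketch below computes a 2D DOP for a range-only fix, assuming equally weighted, uncorrelated ranges and known partner positions; the function name and geometries are hypothetical, and DOP is offered as a standard metric, not as a method proposed in this paper.

```python
import numpy as np


def range_dop(position, nodes):
    """2D dilution of precision of a range-only fix at `position`.

    nodes: (m, 2) coordinates of the ranging partners (anchors, or other
           vehicles whose positions are treated as known)
    """
    d = np.asarray(position, dtype=float) - np.asarray(nodes, dtype=float)
    H = d / np.linalg.norm(d, axis=1)[:, None]  # unit line-of-sight vectors
    Q = np.linalg.inv(H.T @ H)                  # cofactor matrix for unit-variance ranges
    return float(np.sqrt(np.trace(Q)))


# Hypothetical comparison: partners spread around the vehicle vs. clustered on one side
spread = range_dop((0.0, 0.0), [(10, 0), (-10, 0), (0, 10), (0, -10)])
clustered = range_dop((0.0, 0.0), [(10, 0), (10, 1), (10, -1)])
```

The clustered configuration yields a much larger DOP, i.e. the same range noise maps into a larger position error, mainly in the cross-track direction.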

Figure 6: Lane-level experiment. Left: map of the trajectory for one car. Right: photograph of the data collection process and the experimental set-up in the field.

Figure 7: Intersection-level experiment. Left: map of the trajectory for one car. Right: photograph of the data collection process and the experimental set-up.


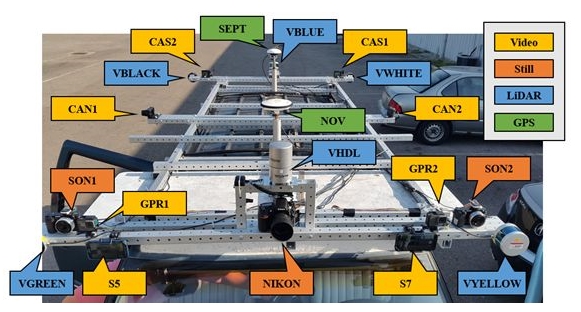

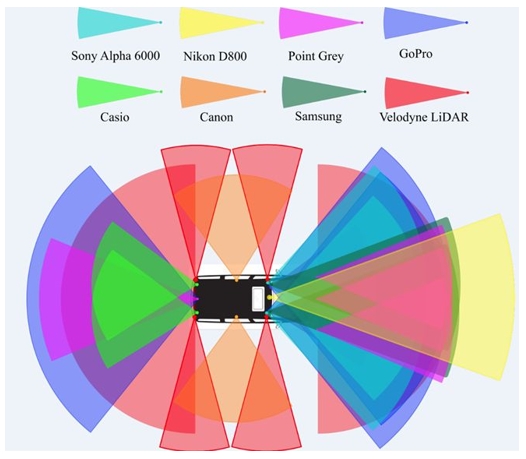

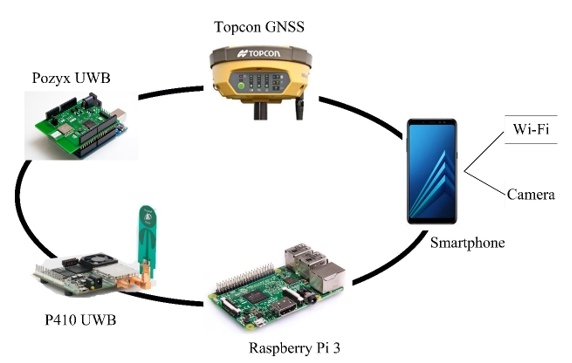

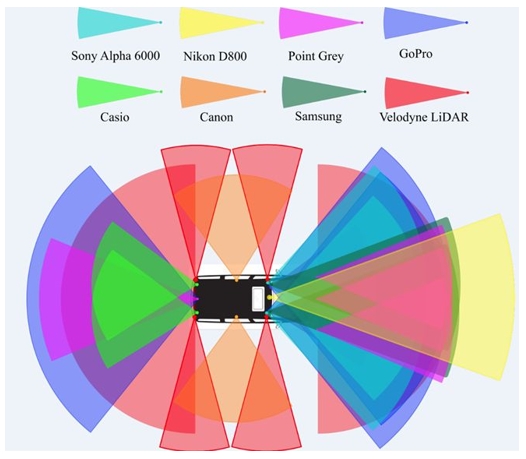

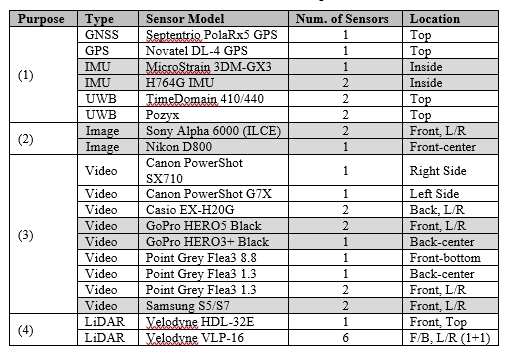

3.1 The Reference Vehicle (GPSVan)

A customized GMC Suburban measurement vehicle, called GPSVan (Grejner-Brzezinska, 1996), which has been further adapted for autonomous vehicle research (Toth et al., 2018; Koppanyi and Toth, 2018), was used for the data acquisition (Figure 8). The navigation sensors, i.e., the GPS/GNSS receivers and IMUs, are located inside the van. A light frame structure installed on the top and front of the vehicle provides a rigid platform for the antennas, the UWB units and the imaging sensors, such as LiDAR and different types of cameras. The sensor configuration used during the data acquisition consists of two GPS/GNSS receivers, three IMUs, four UWB transmitters, three high-resolution DSLR cameras for acquiring still images, 13 P&S (Point and Shoot) cameras for capturing video, and seven mobile LiDAR sensors (Table 1). The sensors serve four primary purposes:

- Georeferencing and time synchronization: GPS/GNSS, UWB and IMU sensors provide accurate time as well as position and attitude data of the platform, allowing for sensor time synchronization and sensor georeferencing (Kim et al., 2004).

- Optical image acquisition: these sensors are carefully calibrated and synchronized in order to provide accurate geometric data for mapping, for instance by using stereo, multiple-image photogrammetric and computer vision methods (Geiger et al., 2011).

- Video logging: these sensors provide continuous coverage of the environment during the tests. Their quality does not allow for the accurate time synchronization and calibration applied to the high-quality still image sensors. Nevertheless, the moderate geometric accuracy combined with the high image acquisition rate allows for efficient extraction and tracking of objects such as traffic signs, road signs and obstacles (Maldonado-Bascon et al., 2007; Greenhalgh and Mirmehdi, 2012). In addition, dynamic objects, such as vehicles, cyclists and pedestrians, can be tracked.

- 3D data acquisition: Velodyne LiDAR sensors allow for direct 3D data acquisition that can be used for object space reconstruction and object tracking (Azim and Aycard, 2012; Jozkow et al., 2016).

GPS/GNSS, UWB and IMU sensors provide accurate georeferencing of the platform and an accurate time base for time synchronization. Antennas located on the top of the GPSVan deliver the GPS/GNSS signals to multi-frequency receivers located inside the vehicle. The Septentrio PolaRx5 receiver with a PolaNt-x MC antenna (SEPT) is a state-of-the-art multi-constellation system that supports logging of multi-frequency signals at high temporal resolution (Septentrio, 2018). The GPS receiver, an older Novatel DL-4 model with a Novatel 600 antenna, is used primarily for time synchronization and as a backup positioning sensor. The GNSS data is post-processed with the DGNSS technique using carrier-phase measurements. The positioning accuracy of the post-processed GNSS data is at centimeter level in open-sky areas. In several areas of the OSU campus, however, the positioning accuracy is lower because of the limited clear line of sight to the satellites (urban-canyon effect). A UWB network was installed in the test area to provide UWB positioning for the tests.

Figure 8: Top view of the GPSVan and fields of view of the imaging sensors.

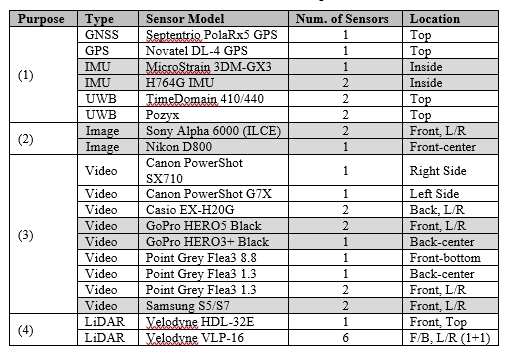

Table 1. Overview of the sensors; see explanation in the text.

The IMU sensors provide attitude data for the georeferencing and are also used to obtain a navigation solution during GPS/GNSS outages. Two types of IMUs were used during the data acquisition. The H764G is a high-accuracy navigation-grade IMU. Two of these sensors are located inside the platform; however, only the H764G-1 is used in the post-processing, where it is fused with the SEPT GNSS data in a Kalman filter to derive the navigation solution. The MicroStrain 3DM-GX3 is a lower-grade IMU, which is used for sensor performance comparison.
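The GNSS/IMU fusion described above can be illustrated with a heavily simplified, loosely coupled Kalman filter. This sketch assumes a 1D position/velocity state, uses the IMU acceleration as the prediction input and GNSS positions as updates, and skips updates during outages; the noise values and the function name `gnss_ins_kf_1d` are hypothetical, and the filter is not the OSU post-processing, which works with full 3D position, velocity and attitude.

```python
import numpy as np


def gnss_ins_kf_1d(acc, gnss_pos, dt, sigma_acc=0.05, sigma_gnss=0.02):
    """Loosely coupled 1D GNSS/INS Kalman filter (illustrative only).

    acc:        IMU accelerations per epoch [m/s^2]
    gnss_pos:   GNSS positions per epoch [m], np.nan during outages
    dt:         epoch interval [s]
    sigma_acc:  hypothetical accelerometer noise [m/s^2]
    sigma_gnss: hypothetical GNSS position noise [m]
    Returns the filtered positions.
    """
    F = np.array([[1.0, dt], [0.0, 1.0]])  # state: [position, velocity]
    B = np.array([0.5 * dt ** 2, dt])      # effect of acceleration on the state
    Q = np.outer(B, B) * sigma_acc ** 2    # process noise driven by accelerometer noise
    H = np.array([[1.0, 0.0]])             # GNSS observes position only
    R = np.array([[sigma_gnss ** 2]])
    x, P = np.zeros(2), np.eye(2)
    out = []
    for a, z in zip(acc, gnss_pos):
        # Prediction: integrate the IMU acceleration
        x = F @ x + B * a
        P = F @ P @ F.T + Q
        # Update: only when a GNSS position is available
        if not np.isnan(z):
            S = H @ P @ H.T + R
            K = P @ H.T @ np.linalg.inv(S)
            x = x + K @ (z - H @ x)
            P = (np.eye(2) - K @ H) @ P
        out.append(x[0])
    return np.array(out)


# Usage: constant acceleration with a simulated GNSS outage
dt, n, a_true = 0.1, 50, 0.2
t = dt * np.arange(1, n + 1)
truth = 0.5 * a_true * t ** 2
gnss = truth.copy()
gnss[20:35] = np.nan
est = gnss_ins_kf_1d(np.full(n, a_true), gnss, dt)
```

With error-free inputs the prediction alone bridges the outage exactly; with a biased accelerometer the position error would grow during the outage, which is why IMU grade matters for GNSS-challenged areas.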

The cameras can be divided into two groups according to their capabilities and operating modes. The first group comprises the DSLR cameras, which captured high-resolution still images at a low sampling frequency (0.5-1 Hz). Due to the low temporal resolution, these cameras are mainly used to provide high-resolution images for deriving accurate geometric data; they are well calibrated and precisely synchronized to the UTC reference time system. The cameras in the second group captured images in video mode, so the environment is recorded at high temporal resolution but at lower image resolution. These cameras are neither rigorously calibrated nor synchronized. Their data streams can be used for real-time scene understanding, image interpretation, obstacle detection or tracking. The various sensor types allow for a comparison of the imaging performance of the different sensors.

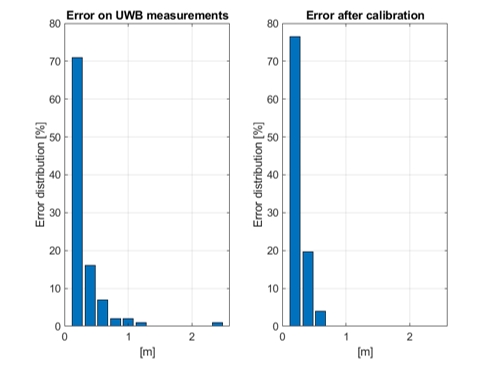

3.2 Ultra-Wide Band Ranging

A UWB-based positioning system is usually formed by a set of static devices fixed at constant positions (anchors) and a set of moving ones (rovers). When the anchor positions are known a priori, the system typically provides positioning with decimeter-level errors. Although this level of accuracy is sufficient for several applications, the potential of the system is higher. Indeed, UWB range measurements are usually characterized by a centimeter-level random error and a (typically larger) systematic error, which depends on the environment (e.g. multipath) and on the configuration of the UWB devices.
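The range error decomposition described above can be written, as a sketch with notation introduced here for illustration, as

$$\tilde{d}_{ij} = \lVert \mathbf{p}_i - \mathbf{a}_j \rVert + b_{ij} + \varepsilon_{ij}, \qquad \varepsilon_{ij} \sim \mathcal{N}(0, \sigma^2),$$

where $\tilde{d}_{ij}$ is the range measured between the rover at position $\mathbf{p}_i$ and the anchor at $\mathbf{a}_j$, $b_{ij}$ is the systematic error that depends on the environment and the device configuration, and $\varepsilon_{ij}$ is the random error with a centimeter-level standard deviation $\sigma$. The Gaussian model for $\varepsilon_{ij}$ is an assumption, not a result of the campaign. Calibration aims at estimating $b_{ij}$ so that it can be subtracted from later measurements.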

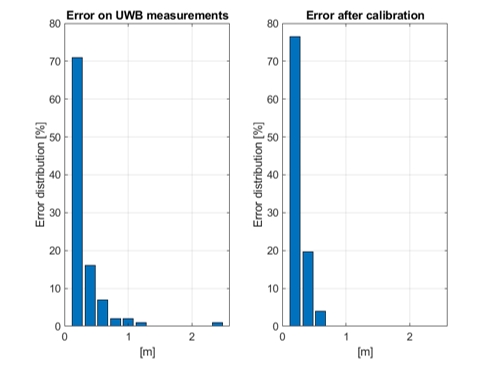

The experiment aims to investigate the possibility of calibrating the UWB system in order to compensate for the effects of the static parts of the environment on the UWB measurements, and hence to improve the overall positioning accuracy. To this end, 14 Pozyx and 14 TimeDomain UWB anchors were fixed on the walls along a corridor on a single floor, as well as in the staircase, of the Bolz Hall building of The Ohio State University, and calibration and validation range measurement datasets were collected by a rover at 35 checkpoints along the corridor (Figure 9).

Figure 9: Positions of the checkpoints along the considered corridor.

Preliminary results were obtained with a very simple calibration model: for each checkpoint, the range error measured during calibration was taken as the bias to be removed during validation at the same checkpoint. Figure 10 shows the UWB range error distribution of the Pozyx rover on the validation dataset and the corresponding distribution after removing the bias estimated during calibration. The results show that this approach can potentially reduce the effect of the systematic error on the UWB measurements. However, it can only reduce the effect of the static parts of the environment, whereas the effect of moving objects or persons is not removed. Since the simple calibration model can only be applied at the positions used for its derivation, generalizations based on two-dimensional spline interpolation and on machine learning are under investigation.
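A minimal sketch of the simple calibration model: the mean error observed at a checkpoint during calibration is subtracted from the validation errors at the same checkpoint. Keying the errors by checkpoint and anchor, and averaging repeated calibration errors, are assumptions; the function names are hypothetical and the error values are invented for illustration, not campaign results.

```python
import numpy as np


def rms(errors):
    """Root-mean-square of an array of range errors [m]."""
    e = np.asarray(errors, dtype=float)
    return float(np.sqrt(np.mean(e ** 2)))


def checkpoint_bias_correction(cal_err, val_err):
    """Per-checkpoint bias removal following the simple model in the text.

    cal_err, val_err: dict (checkpoint, anchor) -> sequence of range errors [m],
                      i.e. measured range minus reference distance
    Returns (uncorrected, corrected) validation errors as flat arrays.
    """
    before, after = [], []
    for key, errs in val_err.items():
        errs = np.asarray(errs, dtype=float)
        bias = float(np.mean(cal_err[key]))  # bias estimated in the calibration run
        before.append(errs)
        after.append(errs - bias)            # removed at the same checkpoint only
    return np.concatenate(before), np.concatenate(after)


# Hypothetical errors for two checkpoint/anchor pairs
cal = {("CP1", "A1"): [0.30, 0.32, 0.28], ("CP2", "A1"): [-0.10, -0.12, -0.08]}
val = {("CP1", "A1"): [0.31, 0.29], ("CP2", "A1"): [-0.11, -0.09]}
raw, corrected = checkpoint_bias_correction(cal, val)
```

The lookup `cal_err[key]` fails for any checkpoint absent from the calibration run, which mirrors the limitation noted above and motivates interpolating the bias between checkpoints.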

Figure 10: Distribution of the range error for the Pozyx rover in the validation dataset (left), and distribution of the error after compensating for the estimated environment effect (right).

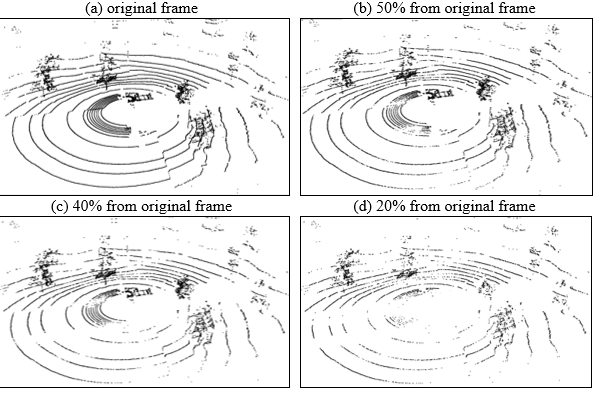

3.3 Velodyne LiDAR Data Reduction

As described above, measurements were performed with various sensors, among them Velodyne LiDARs. The Velodyne HDL-32 LiDAR generates up to ~1.39 million points per second, and the Velodyne VLP-16 LiDAR up to ~600,000 points per second. Thus, these sensors acquire a huge volume of data in a very short time. In many cases, it is reasonable to reduce the size of the dataset by eliminating points in such a way that the reduced dataset meets specific optimization criteria. Many Velodyne LiDAR frames, comprising millions of points, were obtained during the experiments. After pre-processing and georeferencing, the 3D point cloud can be prepared. Standard georeferencing of the Mobile Laser Scanning (MLS) data was based on the transformation from the scanner's local coordinates to global coordinates using the boresight parameters and navigation information from the on-board GPS and IMU. The reduction can be performed either on a single frame obtained directly from the Velodyne LiDAR measurement or on the entire 3D point cloud. To reduce the number of points, the OptD (Optimum Dataset) method can be used.
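The standard georeferencing step mentioned above is commonly written in the following form. This is a generic sketch with notation introduced here; the lever-arm term belongs to the usual formulation but is not spelled out in the text:

$$\mathbf{r}^{m}_{P}(t) = \mathbf{r}^{m}_{\mathrm{GNSS/IMU}}(t) + \mathbf{R}^{m}_{b}(t)\left(\mathbf{R}^{b}_{s}\,\mathbf{r}^{s}_{P}(t) + \mathbf{a}^{b}\right),$$

where $\mathbf{r}^{s}_{P}(t)$ is point $P$ in the scanner's local coordinates at time $t$, $\mathbf{R}^{b}_{s}$ is the boresight rotation from the scanner frame to the IMU body frame, $\mathbf{a}^{b}$ is the lever arm between scanner and IMU expressed in the body frame, $\mathbf{R}^{m}_{b}(t)$ is the attitude from the GPS/IMU navigation solution, and $\mathbf{r}^{m}_{\mathrm{GNSS/IMU}}(t)$ is the navigated position in the global mapping frame $m$.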

The OptD method for processing Airborne Laser Scanning and Terrestrial Laser Scanning data was presented in Błaszczak-Bąk (2016) and Błaszczak-Bąk et al. (2017). The OptD method has two variants: (1) single-criterion optimization, called OptD-single, and (2) multi-criteria optimization, called OptD-multi. The OptD method uses linear object generalization methods, but the calculations are performed in a vertical plane, which allows for accurate control of the elevation component. Błaszczak-Bąk et al. (2018) outlined a single-criterion modification of the OptD method for Mobile Laser Scanning data captured by Velodyne sensors (called OptD-single-MLS). The OptD-single-MLS method is implemented in nine consecutive steps, described in Błaszczak-Bąk et al. (2018).
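The general idea of a single-criterion reduction in a vertical plane can be illustrated with the following Python sketch. It is a simplified stand-in, not the nine-step OptD-single-MLS algorithm: it assumes a single ordered profile (x, z) instead of the strips of a full point cloud, uses Douglas-Peucker as the linear generalization method, and treats the target fraction of retained points as the single criterion, met by bisection on the tolerance. The function names and the synthetic profile are hypothetical.

```python
import numpy as np


def douglas_peucker(pts, tol):
    """Mask of points kept by Douglas-Peucker on an ordered 2D profile (x, z)."""
    keep = np.zeros(len(pts), dtype=bool)
    keep[0] = keep[-1] = True
    stack = [(0, len(pts) - 1)]
    while stack:
        i, j = stack.pop()
        if j <= i + 1:
            continue
        chord = pts[j] - pts[i]
        rel = pts[i + 1:j] - pts[i]
        length = np.hypot(chord[0], chord[1])
        if length == 0.0:
            dist = np.hypot(rel[:, 0], rel[:, 1])
        else:  # perpendicular distance of interior points to the chord
            dist = np.abs(chord[0] * rel[:, 1] - chord[1] * rel[:, 0]) / length
        k = int(np.argmax(dist))
        if dist[k] > tol:
            m = i + 1 + k
            keep[m] = True
            stack.extend([(i, m), (m, j)])
    return keep


def reduce_profile(profile_xz, target_fraction, iters=40):
    """Bisect the tolerance so that at most target_fraction of points remain."""
    pts = np.asarray(profile_xz, dtype=float)
    pts = pts[np.argsort(pts[:, 0])]  # order points along the vertical-plane profile
    lo, hi = 0.0, float(pts[:, 1].max() - pts[:, 1].min()) + 1e-9
    best = np.ones(len(pts), dtype=bool)
    for _ in range(iters):
        tol = 0.5 * (lo + hi)
        keep = douglas_peucker(pts, tol)
        if keep.mean() > target_fraction:
            lo = tol              # too many points kept: increase the tolerance
        else:
            hi, best = tol, keep  # criterion met: try a smaller tolerance
    return pts[best]


# Hypothetical profile: 201 points of an undulating surface, keep at most 20 %
x = np.linspace(0.0, 100.0, 201)
profile = np.column_stack([x, np.sin(x / 5.0)])
reduced = reduce_profile(profile, target_fraction=0.2)
```

Because a larger tolerance never keeps more points in Douglas-Peucker, bisection converges to the smallest tolerance that satisfies the criterion, which gives the kind of control over the number of points that the text attributes to OptD.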

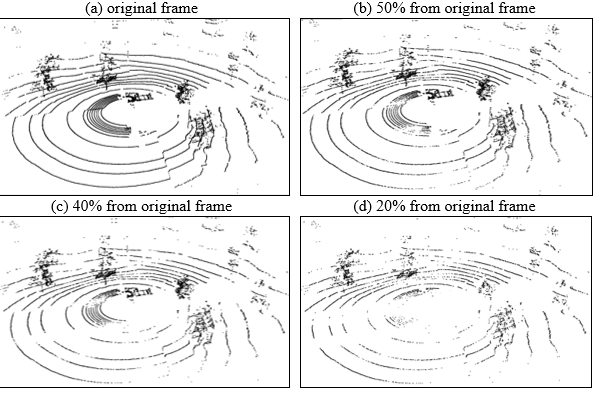

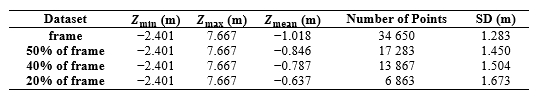

Figure 11 presents the results of the first option, i.e., reduction of a single frame. The original dataset for Frame 1 and the datasets derived after OptD-single-MLS reduction are characterized in Table 2. The OptD method preserves the Zmin and Zmax values, whereas the mean height of the set changes. The SD parameter describes the dispersion of the point heights about the mean; SD increases as the number of points in the point cloud decreases. The OptD-single-MLS method removes those points that have no relevant effect on the terrain characteristics from a practical point of view, and it provides full control over the number of points in the dataset.
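The statement that SD grows as points are removed can be checked with a toy example: when a reduction keeps the extreme heights and drops points close to the mean, Zmin and Zmax are unchanged but the dispersion about the mean increases. The sketch assumes the sample standard deviation (ddof=1), since the text does not specify the estimator; the helper name and heights are hypothetical. In this symmetric toy set the mean stays the same, whereas in real data it generally changes, as noted above.

```python
import numpy as np


def height_stats(z):
    """Height statistics of a point set, as characterized in Table 2."""
    z = np.asarray(z, dtype=float)
    return {"n": z.size, "Zmin": z.min(), "Zmax": z.max(),
            "mean": z.mean(), "SD": z.std(ddof=1)}


# Toy heights [m]: the reduced set keeps the extremes and drops near-mean points
full = height_stats([0.0, 1.0, 1.0, 1.0, 1.0, 2.0])
reduced = height_stats([0.0, 1.0, 2.0])
```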


Figure 11: MLS data: (a) original frame, (b) 50% of points after reduction, (c) 40% of points after reduction, (d) 20% of points after reduction.

Table 2. Characteristics of the datasets obtained with the OptD-single-MLS method for one frame

4. WI-FI INDOOR POSITIONING USING LOCATION FINGERPRINTING

The vast majority of current indoor localization systems are designed for sub-meter accuracy in position estimation, which is unnecessary for most indoor navigation applications (see e.g. Pritt, 2013). Room-level or region-level location granularity is sufficient for most location-aware services (Castro et al., 2001; Chen et al., 2012; Jiang et al., 2012; Jiang et al., 2013). RSS-based Wi-Fi fingerprinting is a typical and frequently used method for location estimation, since it does not require any prior knowledge of the Access Point (AP) deployment. The idea of fingerprinting is to match online RSS measurements against a fingerprint database generated for every location during an offline training phase. In the probabilistic fingerprinting approach, a model of the statistical distribution of the RSS at each location is built from the sample data collected during the training phase. In the online phase, Bayesian inference is used to calculate the probability that a user is at a certain location given a specific observation, and thus to estimate the most likely location of the mobile device. The accuracy of the statistical distribution model directly affects the final performance of probabilistic fingerprint positioning (Xia et al., 2017). Li et al. (2018) proposed a statistical approach to localize a mobile user with room-level accuracy based on a Multivariate Gaussian Mixture Model (MVGMM). The proposed system is designed to handle practical problems such as device heterogeneity, signal reliability and environment complexity, and it does not require users to have prior knowledge of the base stations deployed within the environment. A Hidden Markov Model (HMM) is applied to track the mobile user, where the hidden states are the possible room locations and the RSS measurements are the observations.
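The Bayesian update and HMM tracking described above can be sketched as a forward (filtering) pass over the RSS scans. For brevity, each room is modeled here by a single Gaussian with independent APs instead of the MVGMM of Li et al. (2018), which also captures signal correlations; the function names, the transition matrix and all numerical values are hypothetical.

```python
import numpy as np


def room_loglik(rss, mean, var):
    """Log-likelihood of one RSS scan under a diagonal Gaussian room model."""
    return float(-0.5 * np.sum(np.log(2.0 * np.pi * var) + (rss - mean) ** 2 / var))


def hmm_forward(rss_seq, means, variances, trans, prior):
    """HMM forward (filtering) pass returning room posteriors per scan.

    rss_seq:   (T, n_ap) RSS scans [dBm]
    means:     (n_rooms, n_ap) per-room mean RSS from the training phase
    variances: (n_rooms, n_ap) per-room RSS variances [dB^2]
    trans:     (n_rooms, n_rooms) transition matrix, trans[i, j] = P(j | i)
    prior:     (n_rooms,) initial room probabilities
    """
    alpha = np.asarray(prior, dtype=float)
    posteriors = []
    for t, rss in enumerate(np.asarray(rss_seq, dtype=float)):
        if t > 0:
            alpha = alpha @ trans  # prediction: the user may move between rooms
        loglik = np.array([room_loglik(rss, m, v) for m, v in zip(means, variances)])
        alpha = alpha * np.exp(loglik - loglik.max())  # Bayes update, rescaled
        alpha /= alpha.sum()
        posteriors.append(alpha.copy())
    return np.array(posteriors)


# Hypothetical training statistics for two rooms and three APs
means = np.array([[-40.0, -70.0, -80.0], [-75.0, -45.0, -60.0]])
variances = np.full((2, 3), 16.0)
trans = np.array([[0.9, 0.1], [0.1, 0.9]])
scans = [[-41, -69, -79], [-42, -71, -81], [-74, -46, -61]]
post = hmm_forward(scans, means, variances, trans, prior=[0.5, 0.5])
rooms = post.argmax(axis=1)  # most likely room per scan
```

The transition matrix encodes how likely a user is to stay in or leave a room between scans; in practice it would reflect the floor plan connectivity.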

The aim of the test is to build up the training database for a probabilistic indoor localization system that can localize a mobile user with room-level accuracy using a university wireless network. The test scenario consisted of three stages: (1) calibration of the smartphones, (2) training data measurements and (3) test data collection. The calibration is needed to mitigate RSS variance problems caused by device heterogeneity. For this purpose, static observations (stop-and-go mode of the smartphone CPS App[1]) are carried out, where all devices simultaneously collect 200 scans at different locations. This is followed by the training data collection to construct the fingerprint database for each room in the indoor environment. Here, the collection mode is static, and each user chooses different reference points in the rooms. These locations are chosen randomly and need not be known, but they must be manually labeled with the room ID. In the final stage, test data is collected to track the user's trajectory and verify the proposed system. In this case, the collection mode is kinematic (dynamic walking mode of the CPS App). In total, 11 kinematic walking trajectories were recorded with the different smartphones.
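The text does not specify how the simultaneous calibration scans are used to mitigate device heterogeneity. A common choice, assumed here, is a linear mapping of each device's RSS onto a reference device, fitted by least squares to RSS values of the same APs (for instance, averaged over the simultaneous scans). The function name and the numbers are hypothetical.

```python
import numpy as np


def fit_device_mapping(rss_device, rss_reference):
    """Fit rss_reference ≈ a * rss_device + b by least squares.

    Inputs are RSS values [dBm] of the same APs, observed simultaneously
    by the device to be calibrated and by a reference device.
    """
    x = np.asarray(rss_device, dtype=float)
    y = np.asarray(rss_reference, dtype=float)
    A = np.column_stack([x, np.ones_like(x)])
    (a, b), *_ = np.linalg.lstsq(A, y, rcond=None)
    return float(a), float(b)


# Hypothetical averaged scans of five APs: the device reads systematically differently
device = np.array([-40.0, -55.0, -62.0, -70.0, -81.0])
reference = 0.9 * device - 5.0
a, b = fit_device_mapping(device, reference)
mapped = a * device + b  # device RSS expressed on the reference device's scale
```

Once fitted, the mapping would be applied to every later scan of that device before matching against the fingerprint database, so that all devices share one RSS scale.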

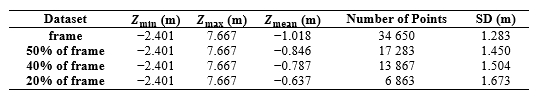

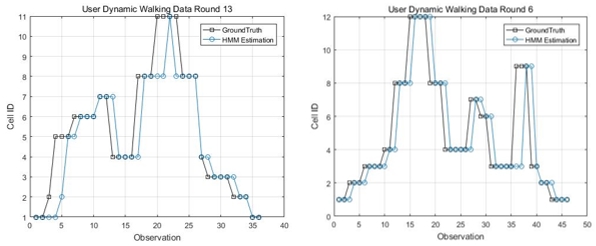

Figure 12 shows two examples of trajectories obtained for one smartphone user. As shown in Li et al. (2018), the walking trajectories along the reference points could be obtained with matching success rates of up to 97%. The MVGMM efficiently approximates the RSS distribution of each room while taking the signal correlations into account. The system obtained a reliable matching accuracy of 93.0% for half of the trials. The performance was further improved to 97.3% by introducing the conditional likelihood observation function, which takes advantage of the unseen AP signatures. Thus, the proposed system demonstrated a practical prototype of a reliable room location awareness system in a real public environment that can handle data uploaded by diverse devices in a noisy environment (Li et al., 2018).

Figure 12: Examples of two kinematic walking trajectories.

5. CONCLUSIONS AND OUTLOOK

In the one-week benchmarking measurement campaign presented in this paper, the main focus was on CP of different platforms, i.e., vehicles, bicyclists and pedestrians, in GNSS-denied/challenged indoor/outdoor and transitional environments. The paper gives an overview of the field experimental schemes, set-ups, characteristics and sensor specifications, along with preliminary results on measurement data reduction, UWB sensor calibration, and Wi-Fi indoor positioning with room-level granularity including user trajectory determination. The test set-ups and employed sensors proved suitable and practicable for the CP localization of all involved sensor platforms, whether vehicles or pedestrians, in the different test scenarios. In the indoor environment, for instance, trajectories of walking pedestrians could be obtained with matching success rates of up to 97% using Wi-Fi fingerprinting. In the case of UWB, positioning with better than decimeter-level accuracy is possible. Further data processing and analyses are currently in progress, and the results indicate significant performance improvements for users navigating within a neighborhood. The extensive dataset is available from the joint FIG/IAG Working Group.

REFERENCES

- Alam, N.; Dempster, A. G. (2013): Cooperative Positioning for Vehicular Networks: Facts and Future. IEEE Transactions on Intelligent Transportation Systems, 14(4), pp. 1708-1717, DOI: 10.1109/TITS.2013.2266339.

- Azim, A.; Aycard, O. (2012): Detection, Classification and Tracking of Moving Objects in a 3D Environment. In Proceedings of the 2012 IEEE Intelligent Vehicles Symposium, Alcala de Henares, pp. 802-807.

- Błaszczak-Bąk, W. (2016): New Optimum Dataset Method in LiDAR Processing. Acta Geodyn. Geomater. 13, pp. 379-386, DOI: 10.13168/AGG.2016.0020.

- Błaszczak-Bąk, W.; Koppanyi, Z.; Toth, C. K. (2018): Reduction Method for Mobile Laser Scanning Data. ISPRS International Journal of Geo-Information, 7(7), 285, DOI: 10.3390/ijgi7070285.

- Błaszczak-Bąk, W.; Sobieraj-Żłobińska, A.; Kowalik, M. (2017): The OptD-multi Method in LiDAR Processing. Meas. Sci. Technol. 28, 075009, DOI: 10.1088/1361-6501/aa7444.

- Castro, P.; Chiu, P.; Kremenek, T.; Muntz, R. (2001): A Probabilistic Room Location Service for Wireless Networked Environments. In Proceedings of the International Conference on Ubiquitous Computing, Göteborg, Sweden, September 29 - October 1, pp. 18-34.

- Chen, Y.; Lymberopoulos, D.; Liu, J.; Priyantha, B. (2012): FM-based Indoor Localization. In Proceedings of the 10th International Conference on Mobile Systems, Applications, and Services, Lake District, UK, June 25-29, pp. 169-182.

- Geiger, A.; Ziegler, J.; Stiller, C. (2011): StereoScan: Dense 3D Reconstruction in Real-time. In Proceedings of the 2011 IEEE Intelligent Vehicles Symposium (IV), Baden-Baden, pp. 963-968.

- Greenhalgh, J.; Mirmehdi, M. (2012): Real-Time Detection and Recognition of Road Traffic Signs. IEEE Transactions on Intelligent Transportation Systems, 13(4), pp. 1498-1506.

- Grejner-Brzezinska, D. A. (1996): Positioning Accuracy of the GPSVan. In Proceedings of the 52nd Annual Meeting of The Institute of Navigation, Cambridge, MA, USA.

- Hofer, H.; Retscher, G. (2017): Combined Wi-Fi and Inertial Navigation with Smart Phones in Out- and Indoor Environments. In Proceedings of the VTC2017-Spring Conference, June 4-7, Sydney, Australia, 5 pgs.

- Jiang, Y.; Xiang, Y.; Pan, X.; Li, K.; Lv, Q.; Dick, R. P.; Shang, L.; Hannigan, M. (2013): Hallway Based Automatic Indoor Floorplan Construction Using Room Fingerprints. In Proceedings of the 2013 ACM International Joint Conference on Pervasive and Ubiquitous Computing, Zurich, Switzerland, September 8-12, pp. 315-324.

- Jiang, Y.; Pan, X.; Li, K.; Lv, Q.; Dick, R. P.; Hannigan, M.; Shang, L. (2012): ARIEL: Automatic Wi-Fi Based Room Fingerprinting for Indoor Localization. In Proceedings of the 2012 ACM Conference on Ubiquitous Computing, Pittsburgh, PA, USA, September 5-8, pp. 441-450.

- Jozkow, G.; Toth, C. K.; Grejner-Brzezinska, D. A. (2016): UAS Topographic Mapping with Velodyne LiDAR Sensor. In ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Volume III-1, XXIII ISPRS Congress, July 12-19, 2016, Prague, Czech Republic.

- Kealy, A.; Toth, C. K.; Grejner-Brzezinska, D.; Roberts, G.; Retscher, G.; Gikas, V. (2011): A New Paradigm for Developing and Delivering Ubiquitous Positioning Capabilities. In Proceedings of the FIG Working Week 2011 ‘Bridging the Gap between Cultures’, May 18-22, 2011, Marrakech, Morocco, 15 pgs.

- Kealy, A.; Retscher, G.; Toth, C. K.; Hasnur-Rabiain, A.; Gikas, V.; Grejner-Brzezinska, D. A.; Danezis, C.; Moore, T. (2015): Collaborative Navigation as a Solution for PNT Applications in GNSS Challenged Environments – Report on Field Trials of a Joint FIG/IAG Working Group. Journal of Applied Geodesy, 9(4), pp. 244-263, DOI: 10.1515/jag-2015-0014.

- Kim, S.-B.; Lee, S.-Y.; Hwang, T.-H.; Choi, K.-H. (2004): An Advanced Approach for Navigation and Image Sensor Integration for Land Vehicle Navigation. In Proceedings of the IEEE 60th Vehicular Technology Conference (VTC2004-Fall), Los Angeles, CA, Vol. 6, pp. 4075-4078.

- Koppanyi, Z.; Toth, C. K. (2018): Experiences with Acquiring Highly Redundant Spatial Data to Support Driverless Vehicle Technologies. In ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Vol. IV-2, pp. 161-168.

- Li, Y.; Williams, S.; Moran, B.; Kealy, A.; Retscher, G. (2018): High-dimensional Probabilistic Fingerprinting in Wireless Sensor Networks Based on a Multivariate Gaussian Mixture Model. Sensors, 18(8), 2602, 24 pgs, DOI: 10.3390/s18082602.

- Maldonado-Bascon, S.; Lafuente-Arroyo, S.; Gil-Jimenez, P.; Gomez-Moreno, H.; Lopez-Ferreras, F. (2007): Road-Sign Detection and Recognition Based on Support Vector Machines. IEEE Transactions on Intelligent Transportation Systems, 8(2), pp. 264-278.

- Pritt, N. (2013): Indoor Location with Wi-Fi Fingerprinting. In Proceedings of the Applied Imagery Pattern Recognition Workshop (AIPR): Sensing for Control and Augmentation, Washington, DC, USA, October 23-25, pp. 1-8.

- Retscher, G.; Kealy, A. (2006): Ubiquitous Positioning Technologies for Modern Intelligent Navigation Systems. The Journal of Navigation, 59(1), pp. 91-103.

- Septentrio (2018): PolaRx5. https://www.septentrio.com/products/gnss-receivers/reference-receivers/polarx-5 (accessed September 2018).

- Toth, C. K.; Koppanyi, Z.; Lenzano, M. G. (2018): New Source of Geospatial Data: Crowdsensing by Assisted and Autonomous Vehicle Technologies. In The International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Vol. XLII-4/W8, FOSS4G 2018 Academic Track, August 29-31, 2018, Dar es Salaam, Tanzania.

- Xia, S.; Liu, Y.; Yuan, G.; Zhu, M.; Wang, Z. (2017): Indoor Fingerprint Positioning Based on Wi-Fi: An Overview. ISPRS International Journal of Geo-Information, 6(5), 135, 25 pgs, DOI: 10.3390/ijgi6050135.

BIOGRAPHICAL NOTES

Allison Kealy is a Professor in the School of Science, Geospatial Science, at RMIT University, Australia. She holds a degree in Land Surveying from The University of the West Indies, Trinidad, and a PhD in GPS and Geodesy from the University of Newcastle upon Tyne, UK. Allison’s research interests include sensor fusion, Kalman filtering, high-precision satellite positioning, GNSS quality control (QC), wireless sensor networks and location-based services (LBS). She is co-chair of the joint FIG WG 5.5/IAG WG 4.1.1 on Multi-sensor Systems, vice president of IAG Commission 4 on Positioning and Applications, and technical representative to the US Institute of Navigation.

Guenther Retscher is an Associate Professor at the Department of Geodesy and Geoinformation of TU Wien – Vienna University of Technology, Austria. He received his Venia Docendi in the field of Applied Geodesy from the same university in 2009 and his Ph.D. in 1995. His main research and teaching interests are in the fields of engineering geodesy, satellite positioning and navigation, indoor and pedestrian positioning, and the application of multi-sensor systems in geodesy and navigation. Guenther is currently co-chair of the joint FIG WG 5.5 and IAG WG 4.1.1 on Multi-sensor Systems.

Jelena Gabela is currently working towards the PhD degree at The University of Melbourne, Australia. Her research interests include sensor fusion, integrity monitoring of multi-GNSS and cooperative positioning. She received her BE and MS degrees in geodesy and geoinformatics from the University of Split, Croatia, in 2014 and the University of Zagreb, Croatia, in 2016, respectively.

Yan Li is currently working towards the PhD degree in the Department of Electrical and Electronic Engineering, The University of Melbourne, Australia. She received her BE degree from the School of Astronautics, Northwestern Polytechnical University, China, in 2011 and her MS from the Centre for Autonomous Systems, University of Technology Sydney, in 2014. Her research interests include wireless sensor networks and inertial navigation.

Salil Goel earned his Ph.D. jointly from the University of Melbourne, Australia, and IIT Kanpur, India, as a Melbourne India Postgraduate Academy (MIPA) scholar in 2017. After working as a Research Fellow at RMIT University, Australia, Salil joined IIT Kanpur, India, as Assistant Professor in June 2018. His research interests include sensor fusion for mapping and navigation, LiDAR, filtering and estimation, and unmanned aerial vehicles.

Charles Toth is a Research Professor in the Department of Civil, Environmental and Geodetic Engineering, The Ohio State University, USA. He received an MSc in Electrical Engineering and a PhD in Electrical Engineering and Geo-Information Sciences from the Technical University of Budapest, Hungary. His research expertise includes spatial information systems, LiDAR, high-resolution imaging, surface extraction, data acquisition, the modeling, integration and calibration of multi-sensor systems, 2D/3D signal processing, and mobile mapping technologies. He has published over 400 peer-reviewed journal and proceedings papers and is the co-editor of the widely used LiDAR book Topographic Laser Ranging and Scanning: Principles and Processing. He is ISPRS 2nd President (2016-2020) and ASPRS Past President.

Andrea Masiero holds a postdoctoral position at the Interdepartmental Research Center of Geomatics of the University of Padua, Italy. His research interests range from geomatics to computer vision, smart camera networks, and the modeling and control of adaptive optics systems. He is currently working on low-cost positioning and mobile mapping systems.

Wioleta Błaszczak-Bąk is an Assistant Professor at the Institute of Geodesy of the University of Warmia and Mazury in Olsztyn, Poland. She received her PhD in 2006. Her research focuses on LiDAR point cloud processing, and she has authored papers on big data optimization.

Vassilis Gikas received the Dipl. Ing. in Surveying Engineering from the National Technical University of Athens, Greece, and the PhD degree in Geodesy from the University of Newcastle upon Tyne, UK. He is currently a Professor with the School of Rural and Surveying Engineering, NTUA. His areas of research are sensor fusion and Kalman filtering for navigation, engineering surveying, and structural deformation monitoring. He is the chair of IAG Sub-Com. 4.1.

Harris Perakis is a PhD candidate at the School of Rural and Surveying Engineering of the National Technical University of Athens. He holds a Dipl. Ing. in Surveying Engineering from the same School (2013). His scientific interests include positioning within indoor and hybrid environments, trajectory assessment and geodetic sensor data fusion.

Zoltan Koppanyi is a postdoctoral researcher at The Ohio State University, USA. He received degrees in computer science and civil engineering, and an MSc in Land Surveying and GIS Engineering. He received his PhD in Earth Sciences from the Budapest University of Technology and Economics. His research interests cover several fields of navigation and mapping, such as navigation in GNSS-denied or corrupted environments, LiDAR and image-based tracking, UWB positioning, sensor fusion, bundle adjustment and dense reconstruction from images.

Dorota Grejner-Brzezinska is a Professor and Associate Dean for Research at the College of Engineering, The Ohio State University (OSU). She served as Chair of the Department of Civil, Environmental and Geodetic Engineering and as Director of the SPIN Laboratory, OSU. Her research interests cover GPS/GNSS algorithms, GPS/inertial and other sensor integration for navigation in GNSS-challenged environments, and sensors and algorithms for indoor and personal navigation. She has published over 300 peer-reviewed journal and proceedings papers and has led over 55 sponsored research projects.

CONTACTS

Dr. Guenther Retscher

Department of Geodesy and Geoinformation

TU Vienna – Vienna University of Technology

Gusshausstrasse 27-29 E120/5

1040 Vienna, AUSTRIA

Tel. +43 1 58801 12847

Fax +43 1 58801 12894

Email: guenther.retscher@tuwien.ac.at

Web site: http://www.geo.tuwien.ac.at/

[1] Combined Positioning System App developed by Hannes Hofer at TU Wien (see, e.g., Hofer and Retscher, 2017).

Figure 7: Intersection-level experiment. On the left: map of the trajectory for one car. On the right: a photograph of the data collection process and the experimental set-up.
